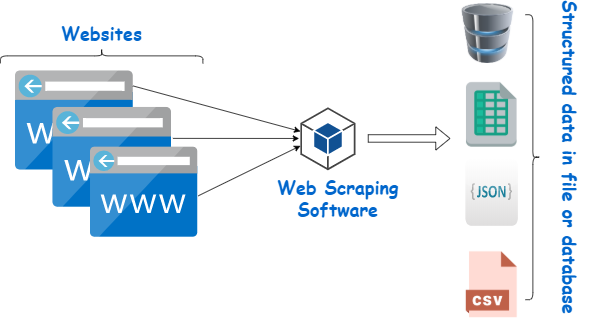

Web scraping, web harvesting, or web data extraction is data scraping used for extracting data from websites. Web scraping software may directly access the World Wide Web using the Hypertext Transfer Protocol or a web browser.

While this can be done manually by a software user, the term typically refers to automated processes implemented using a bot or web crawler. It is a form of copying in which specific data is gathered and copied from the web, typically into a central local database or spreadsheet, for later retrieval or analysis.

Scraping a web page involves fetching it and extracting data from it. Fetching is the downloading of a page (which a browser does when a user views a page). Web crawling is therefore a main component of web scraping, since it fetches pages for later processing. Once a page is fetched, extraction can take place. The content of a page may be parsed, searched, or reformatted, and its data copied into a spreadsheet or loaded into a database. Web scrapers typically take something out of a page to make use of it for another purpose somewhere else. An example would be finding and copying names and telephone numbers, companies and their URLs, or e-mail addresses to a list (contact scraping).
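The two steps described above, fetching and extraction, can be sketched with Python's standard library alone. This is a minimal illustration, not a production scraper: the function names `fetch` and `extract_contacts`, the `LinkParser` class, the `example-scraper/0.1` User-Agent value, and the simplified e-mail pattern are all assumptions made for this example.

```python
import re
from html.parser import HTMLParser
from urllib.request import Request, urlopen


def fetch(url, timeout=10.0):
    """Fetching step: download a page and return its body as text."""
    # The User-Agent value is a placeholder chosen for this sketch.
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class LinkParser(HTMLParser):
    """Extraction step: collect the href of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


# Deliberately simplified pattern; real e-mail syntax is broader than this.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_contacts(html):
    """Return the links and the unique e-mail addresses found in a page."""
    parser = LinkParser()
    parser.feed(html)
    emails = sorted(set(EMAIL_RE.findall(html)))
    return {"links": parser.links, "emails": emails}
```

In use, the output of `fetch(url)` would be passed to `extract_contacts`, and the resulting dictionary could then be written to a spreadsheet or database.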

Web scraping is used for contact scraping, and as a component of applications used for web indexing, web mining and data mining, online price change monitoring and price comparison, product review scraping (to watch the competition), gathering real estate listings, weather data monitoring, website change detection, research, tracking online presence and reputation, web mashup, and web data integration.

Why do we need web scraping?

Web scraping is needed because it allows quick and efficient extraction of data, such as news, from different sources. Such data can then be processed to glean insights as required. As a result, it also makes it possible to keep track of the brand and reputation of a company.

What are the advantages of web scraping?

- Cost-Effective. Web scraping services provide an essential service at a competitive cost.

- Low Maintenance and Speed. Web scraping has a very low maintenance cost over time.

- Data Accuracy. Simple errors in data extraction can lead to major issues.

- Easy to Implement.

What are the disadvantages of web scraping?

- Data Analysis. Data retrieved through scraping needs to be treated (cleaned and normalized) before it can be analyzed.

- Difficult to Analyze. For those who are not tech-savvy or are not experts, web scrapers can be confusing.

- Speed and Protection Policies.
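The point above about treating retrieved data before analysis can be illustrated with a small cleaning pass. This is a sketch under stated assumptions: the record layout (`name` and `price` keys), the helper names `clean_price` and `clean_records`, and the US-style number format (comma as thousands separator) are all hypothetical choices for this example.

```python
import re


def clean_price(raw):
    """Turn a raw scraped price string into a float, or None if no number is found."""
    # Replace non-breaking spaces, which are common in scraped HTML text.
    text = raw.replace("\xa0", " ").strip()
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    if match is None:
        return None
    # Assumes commas are thousands separators (e.g. "1,299.50").
    return float(match.group().replace(",", ""))


def clean_records(rows):
    """Normalize whitespace, parse prices, and drop unusable or duplicate rows."""
    cleaned = []
    seen = set()
    for row in rows:
        name = " ".join(row["name"].split())  # collapse runs of whitespace
        price = clean_price(row["price"])
        if not name or price is None or name in seen:
            continue
        seen.add(name)
        cleaned.append({"name": name, "price": price})
    return cleaned
```

Rows whose price cannot be parsed are dropped here for simplicity; depending on the analysis, keeping them with a missing value may be the better choice.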

What are the main problems in implementing web scraping?

- Bot access. Before you start, check whether your target website allows scraping.

- Complicated and changeable web page structures.

- IP blocking.

- CAPTCHA.

- Honeypot traps.

- Slow/unstable load speed.

- Dynamic content.

- Login requirement.
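Of the problems listed above, slow or unstable load speed is one that a scraper can partly handle itself by retrying failed requests with a growing delay. The following is a minimal sketch of that idea; the function name `fetch_with_retries` and its parameters are assumptions for this example, and the `opener` and `sleep` arguments exist only so the behavior can be exercised without a network.

```python
import time
from urllib.request import urlopen


def fetch_with_retries(url, attempts=3, base_delay=1.0, timeout=10.0,
                       opener=urlopen, sleep=time.sleep):
    """Fetch a URL, retrying on network errors with exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for attempt in range(attempts):
        try:
            with opener(url, timeout=timeout) as response:
                return response.read()
        except OSError as error:
            # urllib's URLError and HTTPError, as well as socket timeouts,
            # are all subclasses of OSError.
            last_error = error
            if attempt < attempts - 1:
                # Wait base_delay, then 2x, then 4x, ... before retrying.
                sleep(base_delay * 2 ** attempt)
    raise last_error
```

Retrying does not help with the other items in the list (CAPTCHAs, login requirements, IP blocking), and repeated retries against a site that is refusing requests should be avoided.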

What are the ethics of web scraping?

I will always provide a User Agent string that makes my intentions clear and provides a way for you to contact me with questions or concerns. I will request data at a reasonable rate. I will strive to never be confused for a DDoS attack. I will only save the data I absolutely need from your page.
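Two of these commitments, an identifying User Agent string and a reasonable request rate, translate fairly directly into code. The sketch below is one possible way to do it with the standard library; the `USER_AGENT` value, the contact address, and the names `build_request` and `RateLimiter` are hypothetical and chosen only for illustration.

```python
import time
from urllib.request import Request

# Hypothetical identity: states the purpose and gives a contact address.
USER_AGENT = "example-research-bot/0.1 (contact: owner@example.com)"


def build_request(url):
    """Create a request that identifies the scraper to the site owner."""
    return Request(url, headers={"User-Agent": USER_AGENT})


class RateLimiter:
    """Enforce a minimum interval (in seconds) between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self._min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None

    def wait(self):
        """Block until at least min_interval has passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self._min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now
```

A scraper would call `limiter.wait()` before each request and pass `build_request(url)` to `urllib.request.urlopen`. What counts as a reasonable interval depends on the site; a few seconds between requests is a cautious starting assumption.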

Is web scraping a crime?

Web scraping is not illegal on its own, but one should be ethical while doing it. If done in a good way, it can help us make the best use of the web, the biggest example of which is the Google search engine.

Is web scraping a good idea?

With web scraping, you can easily build a competitive pricing strategy by collecting data on industry-standard prices, competitor prices, and the price consumers are willing to pay for your product based on their reviews and comments.

How do you scrape ethically?

- The API way is often the best way. Some websites have their own APIs built specifically for you to gather data without having to scrape it.

- Respect the robots.txt.

- Read the Terms and Conditions.

- Be gentle.

- Identify yourself.

- Ask for permission.

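Respecting robots.txt, as recommended in the list above, can be checked programmatically with Python's standard `urllib.robotparser` module. The sketch below parses an in-memory robots.txt so that it runs without network access; the sample rules, the `example-bot` agent name, and the helper `load_rules` are assumptions for this example.

```python
from urllib.robotparser import RobotFileParser


def load_rules(robots_txt):
    """Parse the text of a robots.txt file into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


# Made-up rules for illustration only.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

rules = load_rules(SAMPLE_ROBOTS_TXT)

# Ask before fetching: is this agent allowed to request this URL?
blocked = rules.can_fetch("example-bot", "https://example.com/private/data.html")
allowed = rules.can_fetch("example-bot", "https://example.com/products.html")

# Crawl-delay, when present, suggests how long to pause between requests.
delay = rules.crawl_delay("example-bot")
```

Against a live site, the same parser can load the file itself by calling `set_url()` with the site's robots.txt address followed by `read()`. Note that robots.txt only expresses the site's crawling preferences; the Terms and Conditions still need to be read separately.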

When should you use web scraping?

Use web scraping when you need quick and efficient extraction of data, such as news, from different sources. Such data can then be processed to glean insights as required. As a result, it also makes it possible to keep track of the brand and reputation of a company.